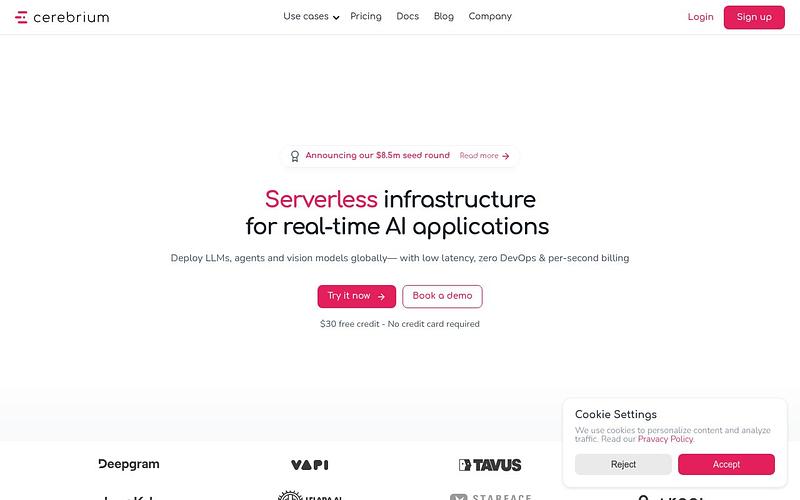

Cerebrium

Serverless GPU infrastructure for deploying AI models with sub-5-second cold starts

Cerebrium is a serverless AI infrastructure platform for deploying machine learning models to GPUs. It supports 10+ GPU types, including T4, A10, A100, H100, and H200, and bills per second, so you pay only for actual inference time. Models auto-scale to handle 10K+ requests per minute, with cold starts under 5 seconds. You deploy standard Python code with no migration needed, and batching, websockets, and ASGI apps are supported out of the box. Cerebrium is backed by Y Combinator and used by Tavus, CivitAI, and Twilio.

Pricing: Pay-per-second

Cerebrium Alternatives


Genesis Cloud

European GPU cloud for AI training and inference powered by 100% green energy

Nebius

Full-stack AI cloud with GPU infrastructure for training and inference

Lambda

GPU cloud for AI training and inference with on-demand and cluster options

CoreWeave

GPU cloud infrastructure built for large-scale AI training and inference workloads
