SGLang

High-performance open-source serving framework for LLMs and multimodal models

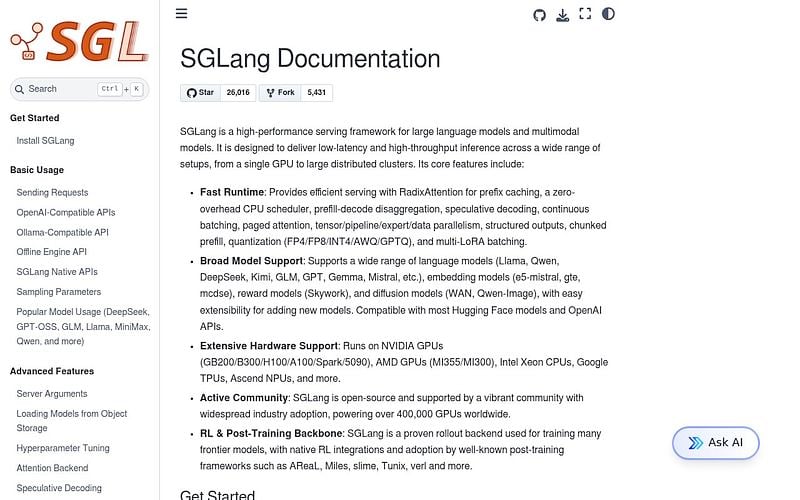

SGLang is an open-source LLM serving framework focused on throughput and structured generation. It pairs a fast runtime with a frontend language for expressing complex prompting patterns (multi-call workflows, structured outputs, tool use) so they execute efficiently on the backend.
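Why would a multi-call program run more efficiently than separate requests? In a multi-turn workflow, each call's prompt usually extends the previous one, so a backend can reuse the work it already did. The toy below shows that pattern. It is only a sketch: `ProgramState`, `gen`, `fake_generate`, and `two_step_workflow` are hypothetical names, not SGLang's frontend API, and the "model" is a stub. Check the SGLang documentation for the real frontend primitives.

```python
from dataclasses import dataclass, field
from typing import Callable, List


# Hypothetical stub standing in for a real model call (e.g. a request to an SGLang server).
def fake_generate(prompt: str) -> str:
    return f"<reply to {len(prompt)} chars>"


@dataclass
class ProgramState:
    """Toy prompt state: every call appends to one growing transcript."""
    text: str = ""
    history: List[str] = field(default_factory=list)  # prompts sent to the backend

    def add(self, s: str) -> "ProgramState":
        self.text += s
        return self

    def gen(self, backend: Callable[[str], str]) -> str:
        # Record the exact prompt sent, then append the model output to the transcript.
        self.history.append(self.text)
        out = backend(self.text)
        self.text += out
        return out


def two_step_workflow(question: str, backend: Callable[[str], str]) -> ProgramState:
    """Hypothetical two-call workflow: answer, then summarize the answer."""
    s = ProgramState()
    s.add("System: answer briefly.\nUser: " + question + "\nAssistant: ")
    s.gen(backend)
    s.add("\nUser: Now summarize that in one word.\nAssistant: ")
    s.gen(backend)
    return s


if __name__ == "__main__":
    state = two_step_workflow("What is 2+2?", fake_generate)
    # The second prompt starts with the entire first prompt, so that prefix can be reused.
    print(state.history[1].startswith(state.history[0]))
```

Expressing both calls as a single program makes the shared prefix visible to the runtime. With independent, unrelated requests, the runtime has to find that overlap on its own.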

The runtime uses RadixAttention to share KV cache across requests with overlapping prefixes, which speeds up multi-turn chat and few-shot prompting. It supports continuous batching, speculative decoding, structured output (constrained JSON), and tensor/pipeline/expert parallelism for large models. It is Apache 2.0 licensed, Python-based, and ships with an OpenAI-compatible HTTP server.
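The core idea behind prefix sharing is that an index over token sequences can report how many leading tokens of a new request are already cached, so only the remainder needs prefill. The sketch below is a toy version of that idea. It uses a plain, uncompressed trie with no eviction and no GPU memory, as a stand-in for the radix tree RadixAttention is built on. `PrefixCache`, `match_prefix`, and `serve` are hypothetical names and are not SGLang's internal API.

```python
from typing import Dict, List


class TrieNode:
    def __init__(self) -> None:
        self.children: Dict[int, "TrieNode"] = {}


class PrefixCache:
    """Toy prefix index over token IDs (uncompressed trie, no eviction, no KV tensors)."""

    def __init__(self) -> None:
        self.root = TrieNode()

    def match_prefix(self, tokens: List[int]) -> int:
        """Return how many leading tokens of `tokens` are already cached."""
        node, n = self.root, 0
        for t in tokens:
            child = node.children.get(t)
            if child is None:
                break
            node, n = child, n + 1
        return n

    def insert(self, tokens: List[int]) -> None:
        """Record a token sequence so later requests can match its prefixes."""
        node = self.root
        for t in tokens:
            node = node.children.setdefault(t, TrieNode())


def serve(cache: PrefixCache, tokens: List[int]) -> int:
    """Hypothetical request handler: return tokens needing fresh prefill, then cache them."""
    reused = cache.match_prefix(tokens)
    cache.insert(tokens)
    return len(tokens) - reused


if __name__ == "__main__":
    cache = PrefixCache()
    system = [1, 2, 3, 4]             # shared system prompt / few-shot examples
    print(serve(cache, system + [10, 11]))  # 6: nothing cached yet
    print(serve(cache, system + [20]))      # 1: the 4-token shared prefix is reused
```

A production cache also has to bound memory, for example by evicting least-recently-used branches, and must keep the actual KV tensors alongside each node. This toy only counts which tokens could be skipped.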

SGLang is widely used as the rollout backend in RL post-training stacks (AReaL, slime, verl, Tunix) and runs production inference at companies including xAI, LinkedIn, and ByteDance. The SGLang docs report adoption across more than 400,000 GPUs worldwide.

SGLang Alternatives

Explore 67 products in the Inference APIs category.

Genesis Cloud

European GPU cloud for AI training and inference powered by 100% green energy

Nebius

Full-stack AI cloud with GPU infrastructure for training and inference

Lambda

GPU cloud for AI training and inference with on-demand and cluster options

CoreWeave

GPU cloud infrastructure built for large-scale AI training and inference workloads
