Lepton

GPU compute marketplace from NVIDIA (formerly Lepton AI). Connects developers to 20+ cloud providers through one interface for training, dev pods, and inference.

Lepton AI was acquired by NVIDIA in April 2025 and relaunched as DGX Cloud Lepton. The platform operates as a GPU compute marketplace connecting developers to capacity from 20+ cloud providers (CoreWeave, Lambda, Nebius, Crusoe, and others) through a single interface. It supports Dev Pods for interactive development with SSH and Jupyter, Batch Jobs for distributed training, and Inference Endpoints with auto-scaling via NVIDIA NIM. There is no publicly documented free tier. The original Lepton AI product, which offered serverless open-source model inference with a developer-friendly API, has been sunset.

Pricing: Monthly subscriptions + usage-based

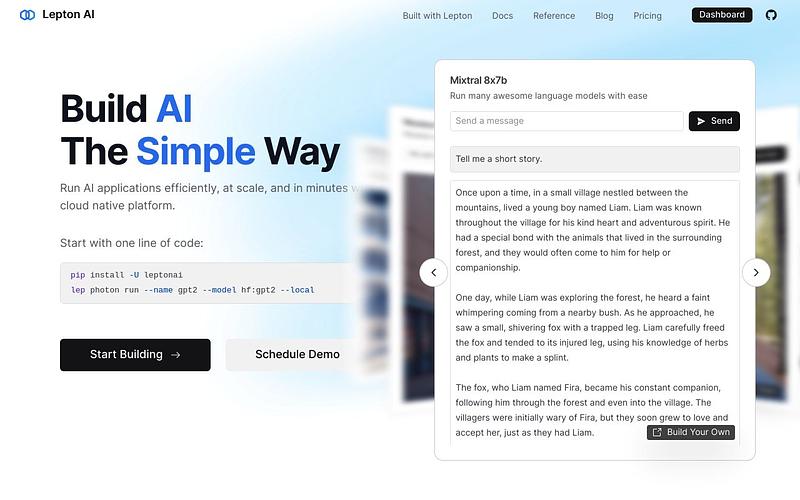

What is Lepton AI?

Lepton AI was a serverless inference platform that let developers run open-source AI models through a simple API. NVIDIA acquired Lepton AI in April 2025 and relaunched it as NVIDIA DGX Cloud Lepton in May 2025.

What is DGX Cloud Lepton?

DGX Cloud Lepton is a GPU compute marketplace that connects developers to capacity from 20+ cloud providers (CoreWeave, Lambda, Nebius, Crusoe, and others) through a single unified interface. NVIDIA describes it as "ridesharing for AI," aggregating available GPU resources across partners so developers can find and use compute without managing multiple cloud accounts.

Key Features

The platform supports three workload types: Dev Pods for interactive development with SSH and Jupyter access, Batch Jobs for distributed training, and Inference Endpoints for model deployment with auto-scaling via NVIDIA NIM microservices. Developers can switch between cloud providers without rearchitecting their workloads, and the platform supports data sovereignty by allowing workloads to run in specific regions.

Pricing

Pricing is marketplace-based. Individual cloud providers set their own rates for GPU capacity, with options for on-demand or reserved compute. There is no publicly documented free tier. NVIDIA offers up to $100,000 in credits to eligible VC portfolio companies through partner programs with Accel, Elaia, Partech, and Sofinnova Partners.

Who Should Use It

DGX Cloud Lepton is aimed at teams that need GPU compute across multiple providers without the overhead of managing separate cloud accounts. It is a different product from the original Lepton AI, which focused on serverless inference with a developer-friendly API and a free tier.

Lepton Alternatives

Packet.ai

On-demand NVIDIA Blackwell GPU cloud with per-second billing, SSH, CLI, and an OpenAI-compatible inference API
