Galileo

AI evaluation and observability platform with hallucination detection and real-time guardrails

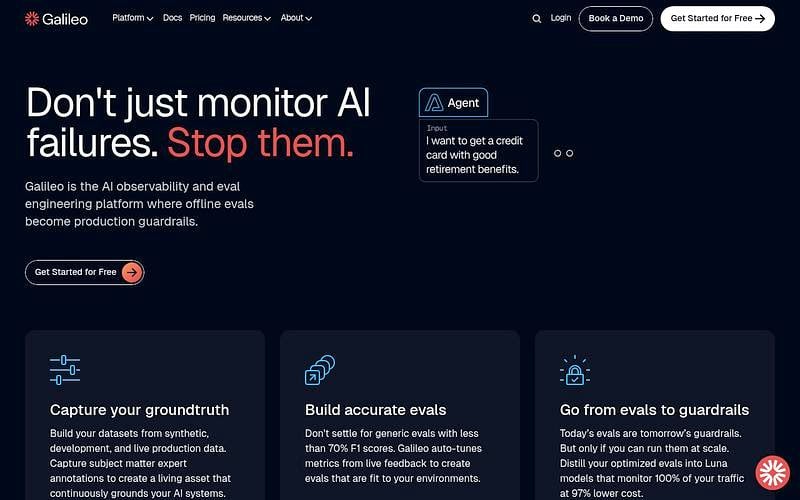

Galileo is an AI observability and evaluation platform for GenAI applications and agents. It offers offline evaluation with 20+ built-in metrics, production monitoring with custom dashboards, and real-time guardrails powered by its proprietary Luna small language models. Luna models take the place of expensive LLM-as-judge evaluators, delivering sub-200ms latency and a 97% cost reduction. Galileo integrates with CrewAI, LangGraph, the OpenAI Agents SDK, LlamaIndex, and Amazon Strands. It also publishes the Hallucination Index, a public benchmark that ranks LLMs by hallucination rate.

Pricing: Monthly subscriptions

Galileo Alternatives

Explore 32 products in the Observability & Analytics category.

Future AGI

Open-source platform for testing, monitoring, and improving AI agents, with tracing, evals, guardrails, and a gateway

Sentrial

Production monitoring for AI agents with automated failure detection and diagnosis

Agenta

Open-source prompt management, evaluation, and observability for LLM apps
