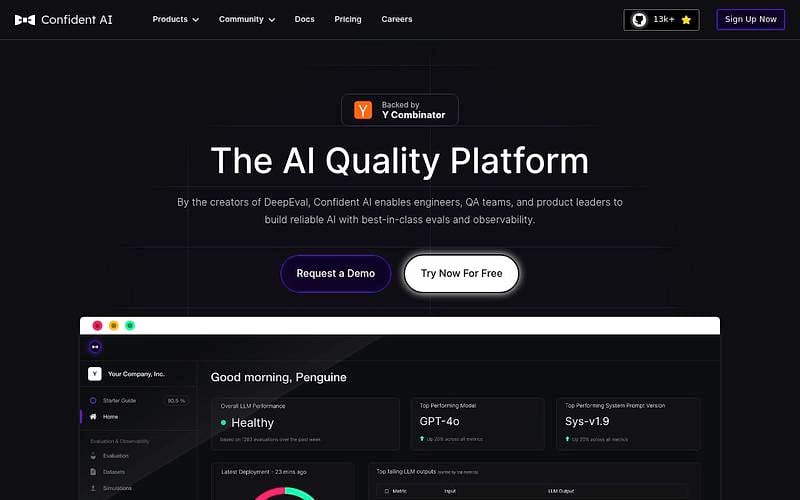

DeepEval

Open-source LLM evaluation framework with 50+ metrics for testing agents, RAG, and chatbots

DeepEval is an open-source evaluation framework for LLM applications that works like Pytest but is specialized for unit testing LLM outputs. It provides 50+ research-backed evaluation metrics, including G-Eval, relevance, factual consistency, bias, and toxicity detection. It covers AI agents, RAG pipelines, and chatbots, with support for synthetic dataset generation, red teaming, and CI/CD integration. Confident AI is the commercial platform layer, adding collaboration, visualization, production tracing, and observability. DeepEval has 3M+ monthly downloads.
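To make the "Pytest for LLM outputs" idea concrete, here is a minimal sketch of a threshold-scored unit test written in plain Python. It does not use DeepEval's API: `LLMOutputCase`, `keyword_relevance`, and `assert_metric` are hypothetical names invented for illustration, and the keyword-matching metric is a toy stand-in for the LLM-judged metrics a real framework would provide.

```python
from dataclasses import dataclass


@dataclass
class LLMOutputCase:
    """Hypothetical test case: a prompt and the model's answer."""
    prompt: str
    actual_output: str


def keyword_relevance(case: LLMOutputCase, keywords: list[str]) -> float:
    """Toy metric: fraction of expected keywords found in the output.

    This is an assumption made for illustration; a real relevance
    metric would typically rely on an LLM judge, not string matching.
    """
    if not keywords:
        return 0.0
    text = case.actual_output.lower()
    hits = sum(1 for kw in keywords if kw.lower() in text)
    return hits / len(keywords)


def assert_metric(score: float, threshold: float) -> None:
    """Fail the test when the metric score falls below the threshold."""
    assert score >= threshold, (
        f"score {score:.2f} is below threshold {threshold:.2f}"
    )


def test_refund_answer_is_relevant() -> None:
    # In a real suite, actual_output would come from the LLM application.
    case = LLMOutputCase(
        prompt="What is your refund policy?",
        actual_output="We offer a full refund within 30 days of purchase.",
    )
    score = keyword_relevance(case, ["refund", "30 days"])
    assert_metric(score, threshold=0.7)
```

Because the test is an ordinary function whose name starts with `test_`, a runner such as Pytest can collect it, which is what lets this style of evaluation run in a CI/CD pipeline alongside regular unit tests.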

Pricing: Free / monthly subscriptions

DeepEval Alternatives

Explore 32 products in the Observability & Analytics category.

Future AGI

Open-source platform for testing, monitoring, and improving AI agents with tracing, evals, guardrails, and gateway

Sentrial

Production monitoring for AI agents with automated failure detection and diagnosis

Agenta

Open-source prompt management, evaluation, and observability for LLM apps
