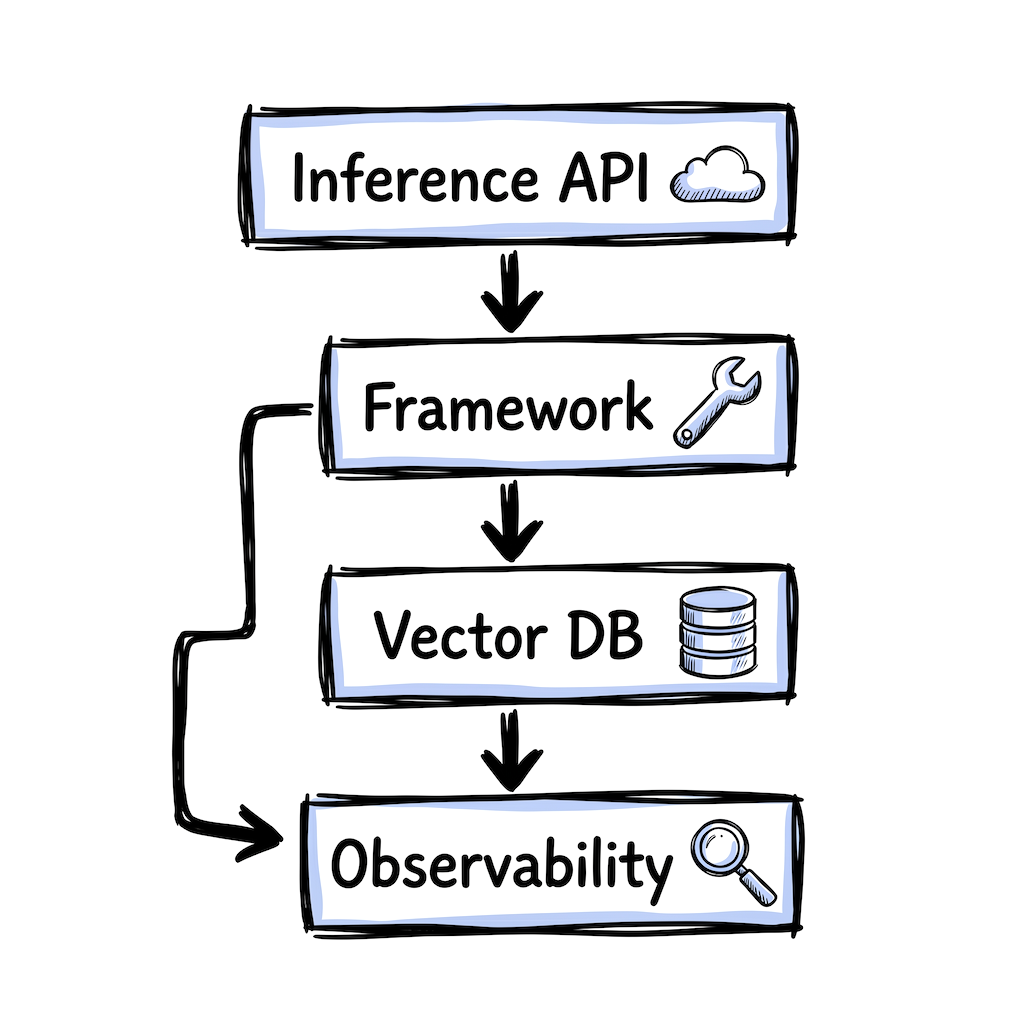

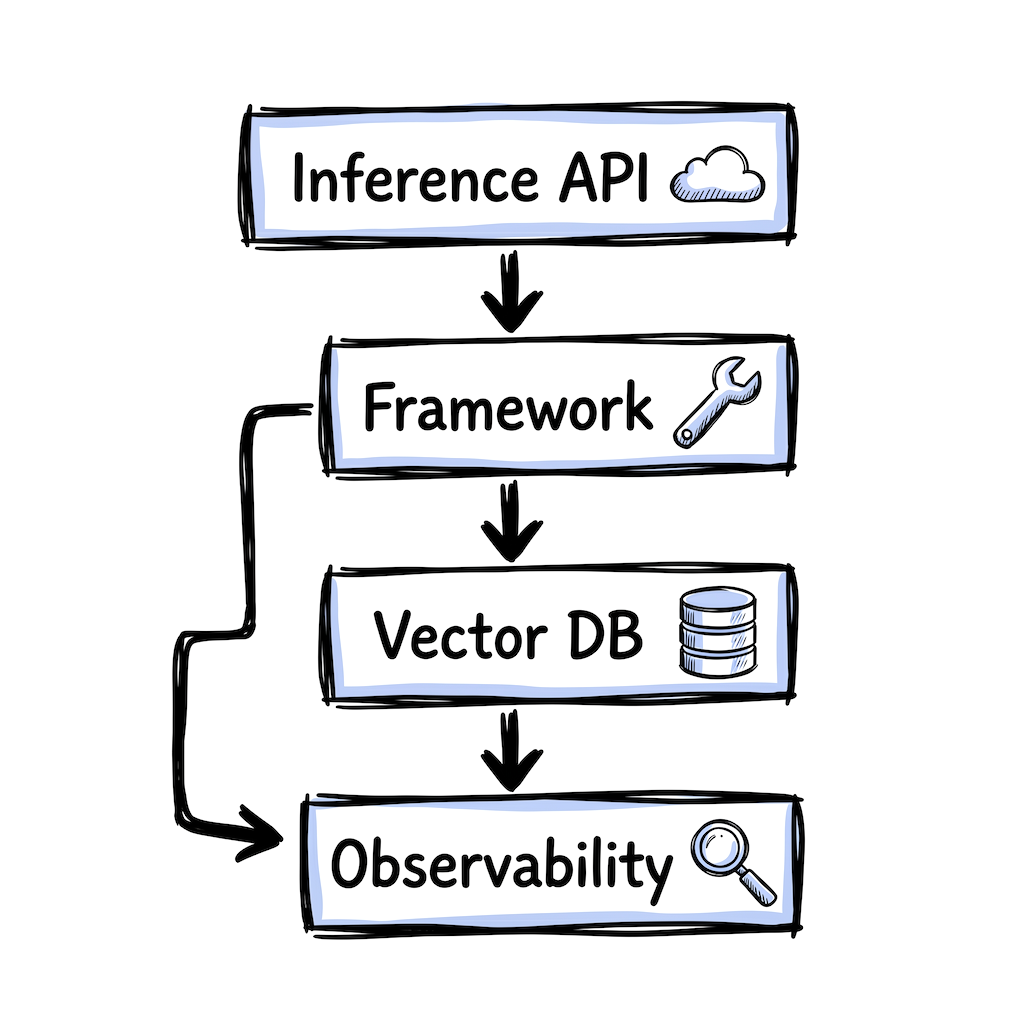

AI Infrastructure Stack

Indie & Early Startup Stack

For solo developers and small teams building chatbots, document Q&A tools, or AI-powered features. Free tiers, low cost, fast to ship.

Inference API

☁️The model endpoint your app calls. All three options below use the OpenAI-compatible API format, which means you can swap providers by changing a base URL and API key. That makes early decisions low-risk.

Free tier, no credit card. Very low latency on custom LPU hardware, good for chat UIs. Smaller model catalog, higher per-token cost than DeepInfra.

Free tier, 1M tokens/day. High throughput on open-source models. Useful for agentic workflows with many sequential calls. No fine-tuning.

Consistently low per-token pricing. Wide model catalog. Good for production workloads like batch summarization or embedding generation.

Framework (maybe)

🔧For a chatbot or single-model integration, the provider's SDK (OpenAI, Anthropic) can be enough. A framework helps when you need RAG, multi-step agents, or streaming into a web UI.

Type-safe agents from the Pydantic team. Minimal learning curve if you use FastAPI. Good for tools that call APIs and return structured data.

Natural fit for React and Next.js. Handles streaming responses into your UI. 25+ provider integrations. Focused on web app AI features.

Specifically for RAG: answering questions over PDFs, docs, or a knowledge base. 160+ data connectors. Less relevant outside document retrieval.

Vector Database

🗄️Only needed for RAG or semantic search. Stores embeddings and finds relevant context for your prompts. Many developers skip a dedicated database and use pgvector in their existing Postgres.

If you already run Postgres, start here. Vectors live next to your app data, no syncing. Handles millions of vectors. Limits past ~10M or advanced filtering.

Dedicated vector DB for when pgvector is not enough. Fast filtered search. Open source with a managed free tier.

Fastest path to a working prototype. Runs in-process, no server needed. Good for hackathons. Most teams migrate to pgvector or Qdrant for production.

Observability

🔍See what your LLM calls are doing: prompts, responses, latency, cost. Without this, debugging a bad answer means guessing. Structured logging works to start, a dedicated tool helps once you iterate on prompt quality.

Most popular open-source LLM observability. Framework-agnostic (Pydantic AI, Vercel AI SDK, raw OpenAI SDK). Free tier, 50K events/month. MIT-licensed.

Open source, built on OpenTelemetry. Plugs into existing Datadog or Grafana setups rather than adding a new dashboard.

Stronger on evaluation than tracing. Useful once you ship LLM features regularly and need to catch quality regressions. No seat-based pricing.

Things to keep in mind

- Start small. A chatbot can ship with just an inference API and the provider's SDK. Add layers as you hit real limitations, not because a guide told you to.

- Free tiers change. Check the provider's pricing page before building on one.

- If something feels wrong after a few weeks, switch. The tools here are designed to be replaceable.

- This stack is a starting point, not a prescription. The best stack is the one that ships.

Frequently asked questions

What AI tools do indie developers need to get started?

At minimum, an inference API (like Groq, Cerebras, or DeepInfra) and the provider's SDK. Add a vector database when you need RAG, a framework when complexity demands it, and observability when real users rely on it.

Can you build AI features without a framework like LangChain?

Yes. The OpenAI and Anthropic SDKs now handle tool calling, structured output, and conversation state natively. A framework helps when you need RAG pipelines, multi-step agents, or streaming into a web UI, but many teams ship without one.

What is the cheapest way to run AI inference?

Groq and Cerebras offer free tiers with no credit card required. DeepInfra has consistently low per-token pricing for production workloads. All three use OpenAI-compatible APIs, so switching between them is a URL change.

Do I need a vector database for my AI project?

Only if you are building RAG (retrieval-augmented generation) or semantic search. If you already run Postgres, pgvector is a good starting point. Otherwise, Qdrant offers a managed free tier.

Last updated: April 2026

Is your product missing?